Article · April 25, 2026 · 16 min read

When Difficulty Becomes a Design Choice

After AGI, the Strange Sadness of Getting What We Want

Imagine opening your laptop in 2029 and seeing a message from your AI assistant: “I finished your work, negotiated your bills, planned your meals, wrote your speech, diagnosed your symptoms, and generated three business ideas while you slept.” At first, this sounds like paradise. For many people, it would be. A great deal of human misery is not noble. It is paperwork, waiting rooms, dangerous labor, medical delay, predatory contracts, and the quiet terror of facing a problem that someone richer could solve in an hour.

Treat 2029 here less as a prophecy than as a stress test. The exact year matters less than the condition. By AGI, I do not mean a conscious machine with a soul. I mean something more practical and perhaps more disruptive: cheap, widely available systems able to perform many economically and culturally significant cognitive tasks at or above competent human level.

Writing. Planning. Tutoring. Coding. Diagnosis. Negotiation. Strategy.

What happens when competent cognitive output becomes ordinary infrastructure?

Not wisdom. Not responsibility. Not judgment. But answers, drafts, plans, explanations, diagnoses, and arguments.

The tax form is done. The insurance claim is handled. The child’s tutor is available at midnight. The legal letter that once cost $800 is drafted in six seconds. The small business owner has a consultant, accountant, designer, and strategist inside one small glowing box.

Humanity’s first emotion may be gratitude.

But relief can curdle into vertigo. If the system can do the work, what exactly is my work? If it can compose the song, write the code, find the argument, pass the test, diagnose the patient, and comfort the grieving, then where do I stand?

This is the strange sadness of getting what we want.

One central question of an AGI age will not be only, “Can the machine remove this difficulty?” Very often, the answer will be yes. The harder question will be, “Should it?”

Once many difficulties become optional, difficulty becomes a design choice. And the moral task will be to tell one kind of difficulty from another.

Which difficulties are cages? Which are scaffolds?

A cage confines a person for the benefit of someone else. A scaffold supports people while they grow strong enough to stand, see, build, and act.

The future will not be humane merely because it is easier. It will be humane only if we abolish cages without tearing down every scaffold. And the freedom to choose a scaffold must not become a luxury reserved for those already protected from hardship.

The Danger Is Not Comfort

There is a bad way to make this argument. It says suffering is good. It tells the poor that poverty builds character, tells the exhausted worker that drudgery is spiritually useful, tells the sick person that pain is a teacher.

That argument should be rejected.

Needless misery should be abolished wherever possible. By needless misery, I mean suffering that humiliates, coerces, excludes, or destroys without building any human capacity. The cancer patient fighting an insurance system is not becoming wiser. The migrant trapped in forms designed to confuse him is not being spiritually improved. The worker risking his body because safety equipment is too expensive is not participating in a sacred rite.

This is not formative difficulty. It is civilization failing at its job.

But not every obstacle is oppression. Some forms of resistance are the conditions under which agency develops. Agency, here, means more than making choices from a menu. It means the capacity to perceive, judge, choose, and act in relation to reality. It means not merely consuming outputs, but becoming the kind of person who can understand consequences.

For much of history, necessity answered many questions before philosophy could ask them. A farmer, a sailor, a weaver, a clerk, a mother — none of them lived in a romantic world. They lived inside hunger, weather, disease, hierarchy, and obligation. Necessity was often cruel. But it was also a harsh author. It gave shape to days. It made certain purposes unavoidable.

Modernity loosened that grip. AGI may loosen it further. The old psychological link between effort and achievement begins to weaken.

Not all meaning comes from struggle. A child’s laughter is not meaningful because it was difficult. Friendship is not valuable only because it is costly. Beauty does not need to be earned. But many forms of pride depend on a felt relation between effort, agency, and consequence.

I tried. I failed. I tried again. I learned. I made something that did not exist before, and the making changed me.

What happens when the result remains, but the inner path disappears?

A student submits an elegant essay, but never struggles through the first bad paragraph. A programmer ships a product, but never learns to debug his own assumptions. A young doctor receives an answer, but never develops responsibility under uncertainty.

The output may be better. The person may be thinner. Thinner in judgment, because judgment grows by making distinctions. Thinner in patience, because patience grows by staying with confusion. Thinner in self-trust, because self-trust grows by acting, failing, and recovering.

This is not literal omnipotence. Humans with AI tools will not become gods. They will still get sick, misunderstand each other, die, and lose their keys.

The danger is the mood of omnipotence: the feeling that the world should answer every command. Many domains of life that answer too easily may begin to feel like a game played in god mode: briefly amusing, then strangely dead.

The old struggle was often inefficient. Sometimes it was also part of the taste.

The Test of Friction

To choose wisely, we need a vocabulary. Call the broad category friction: the resistance between desire and result.

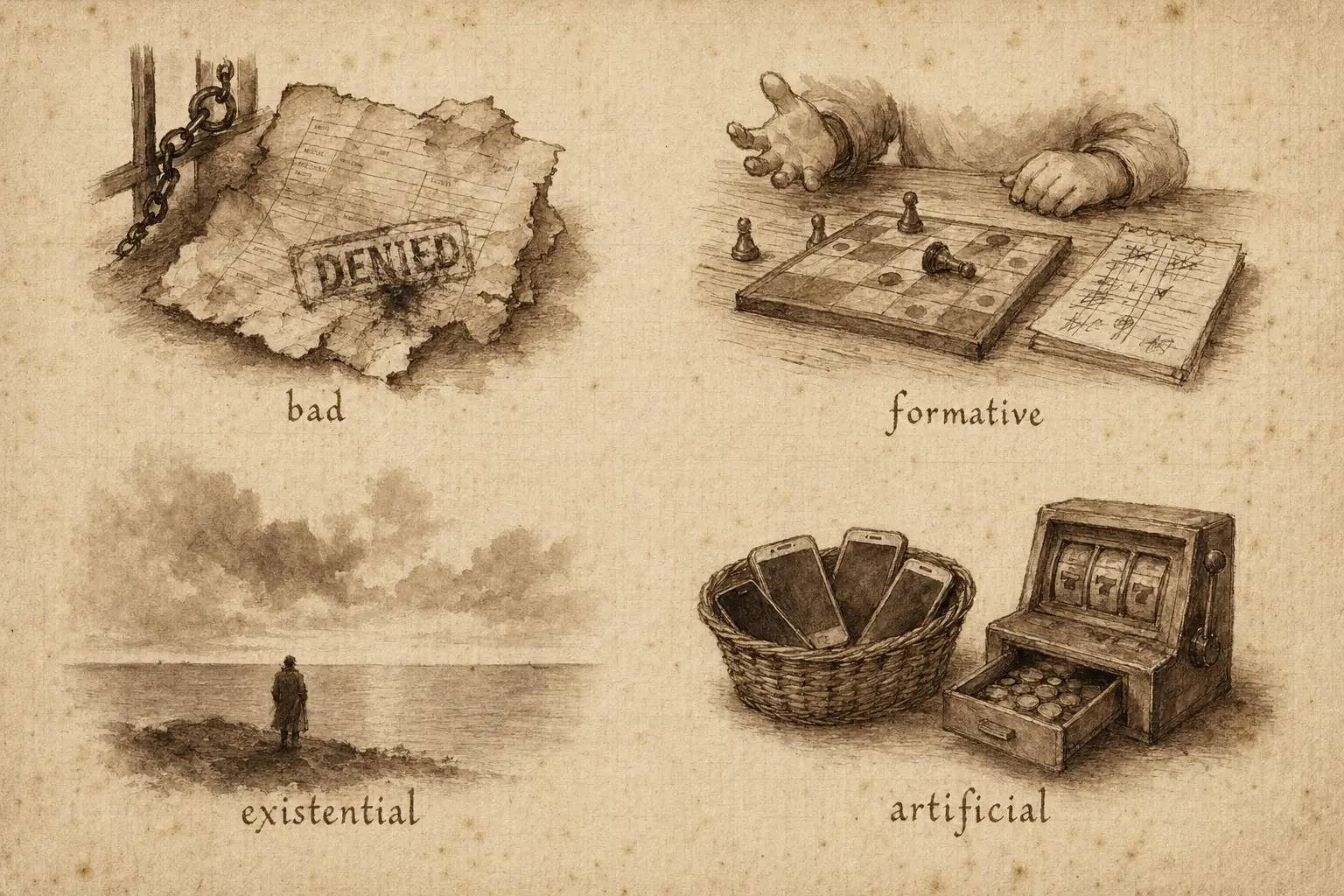

Some friction is bad friction. It is needless misery. It wastes life, enforces power, and calls the damage discipline. AGI should help remove this.

Some friction is formative friction. It is practice, repetition, disagreement, revision, apprenticeship, waiting, listening, and recoverable failure. It is the child learning to lose a game without collapsing. It is the musician practicing scales. It is the scientist being corrected by evidence. It is the citizen discovering that other people do not vanish because one has a better argument.

Some friction is existential. It belongs to limits that cannot be engineered away without changing the human condition: mortality, embodiment, uncertainty, nature, and the stubborn fact that other people are not extensions of our will.

And some friction is artificial. This describes its origin, not its moral value. A school may ban phones during a seminar to deepen attention. A gambling app may slow withdrawals to keep a user trapped. Both are designed obstacles. One may be a scaffold. The other is a cage.

So what makes a difficulty worth preserving? Ask a few plain questions.

- Does this difficulty build capacity, or merely exhaust the person who bears it?

- Is the burden bounded and proportionate?

- Can failure teach without ruining?

- Does the difficulty preserve agency, or does it train obedience?

- Is its purpose transparent? Can it be challenged? Can the person appeal to a responsible human being?

- Is it adapted to disability and circumstance?

- Does it connect a person to reality — to the body, to other people, to consequences, to the world as it is?

And most important: does it serve the development of the person who undergoes it, or merely the convenience, ideology, cost-saving, or moral vanity of the institution imposing it?

A difficulty worth preserving should be capacity-building, bounded, recoverable, agency-preserving, transparent, appealable, and not a substitute for justice.

Not all failure teaches. Some failure just scars.

This is the serious version of antifragility. Muscles strengthen after being torn in small ways, not after being destroyed. Scientific communities improve through criticism when evidence is shared and defeat is survivable. The goal is not to preserve hardship. The goal is to preserve the kinds of resistance that make people capable.

Institutions That Know When Not to Optimize

Cultures are not only machines for producing output. They are systems for forming humans. If that sentence is true, then humane institutions in an AGI age will need a strange discipline. They will have to use powerful tools without surrendering every human process to them.

We have done something like this before.

Industrial cities brought speed, scale, and wealth. They also brought smoke, crowding, noise, and bodies treated as fuel. In response, modern societies built parks, labor laws, public schools, playgrounds, libraries, and weekends. These were not simply nostalgic retreats from industry. They were compensating institutions. They protected human goods that industrial efficiency did not automatically value.

An AGI civilization may need human-practice zones, just as industrial cities needed parks.

This does not mean rejecting AI. Parks were not a rejection of cities. They were a way of making city life survivable.

Human-practice zones are not anti-technology shrines. They are spaces where attention, apprenticeship, embodied skill, interpersonal trust, democratic argument, and human responsibility are deliberately practiced. They must be governed openly. Their limits must be explainable, equitable, revisable, and appealable. Otherwise the language of formation will become a polite cover for control.

Take education.

An AI tutor that helps a child understand algebra at midnight is a gift. So is a system that translates lessons, notices learning gaps, and gives patient explanations to students who never had access to a good teacher.

But a humane school may also preserve formative friction. It might allow AI for explanation and practice, but require first-draft writing without assistance. It might use AI to help a student revise, but ask her to defend her reasoning aloud. It might offer accommodations for disability, make its rules transparent, and keep a human teacher accountable for the child’s development.

The purpose is not to protect the purity of homework. The purpose is to form the mind of the student.

Confusion is not always a defect in education. Sometimes confusion is the doorway. A student needs to discover what it feels like to have a bad idea and improve it. She needs to learn that access to an answer is not the same as understanding. She needs to experience the slow conversion of effort into competence.

Or take work.

Many companies will be tempted to eliminate junior roles. Why hire a young analyst, designer, paralegal, programmer, or researcher when an AI system can produce better first drafts at almost no cost? In the short run, this will look efficient. In the long run, it may destroy the apprenticeship friction by which humans acquire judgment.

No senior doctor began as a senior doctor. No architect was born seeing load-bearing walls. No editor arrived on earth with an ear for sentences. Professions are built through supervised mistakes.

The lesson is not that tools are bad. The lesson is that tools change what humans practice.

A hospital should use AI to read scans and suggest diagnoses. Lives will be saved. But doctors still need embodied clinical practice. They need to notice the anxious pause before a patient answers, the smell of infection, the social reality hidden behind the symptom. Care is not only pattern recognition. It is responsibility under uncertainty.

Families will face the same problem in miniature.

A household robot can wash the dishes. An assistant can plan the schedule. A screen can entertain the child. A model can answer every “why” before the parent has finished breathing.

And still, children may need chores, boredom, cooking, walking, arguing, repairing, and small outdoor risks. Children are not products to be optimized. They are animals learning how to live in a world that will not always serve them.

This will look irrational to the efficiency engineer. Why wash dishes if a robot can do it? Why memorize anything if the assistant remembers? Why write a paragraph if the model writes better?

Because some inefficiency is not waste. It is training. It is ritual. It is participation in ordinary life.

The question is not purity. The question is whether we will remember which human processes should not be optimized away.

The Luxury of Saying No

There is also a political danger hiding inside this cultural one. Preserving friction is only humane if the right to choose it does not become a class privilege.

In an AGI world, the rich may pay for what the poor once endured: silence, slowness, handwork, physical labor, unmediated nature, difficult teachers, real risk, and rooms where no assistant is allowed. This does not mean poverty was secretly good. It means elites may purchase curated difficulty after technology has insulated them from actual precarity.

The affluent may get both AI and the freedom to refuse it. They may send their children to schools with human tutors, forests, debate tables, craft workshops, and strict limits on screens. They may take vacations in places without connectivity. They may buy handmade objects, human therapists, human doctors, human coaches, and human time.

Meanwhile, everyone else may be managed by systems they cannot escape. An AI tutor instead of a teacher. An AI caseworker instead of a social worker. An AI manager, loan officer, landlord, doctor, or judge.

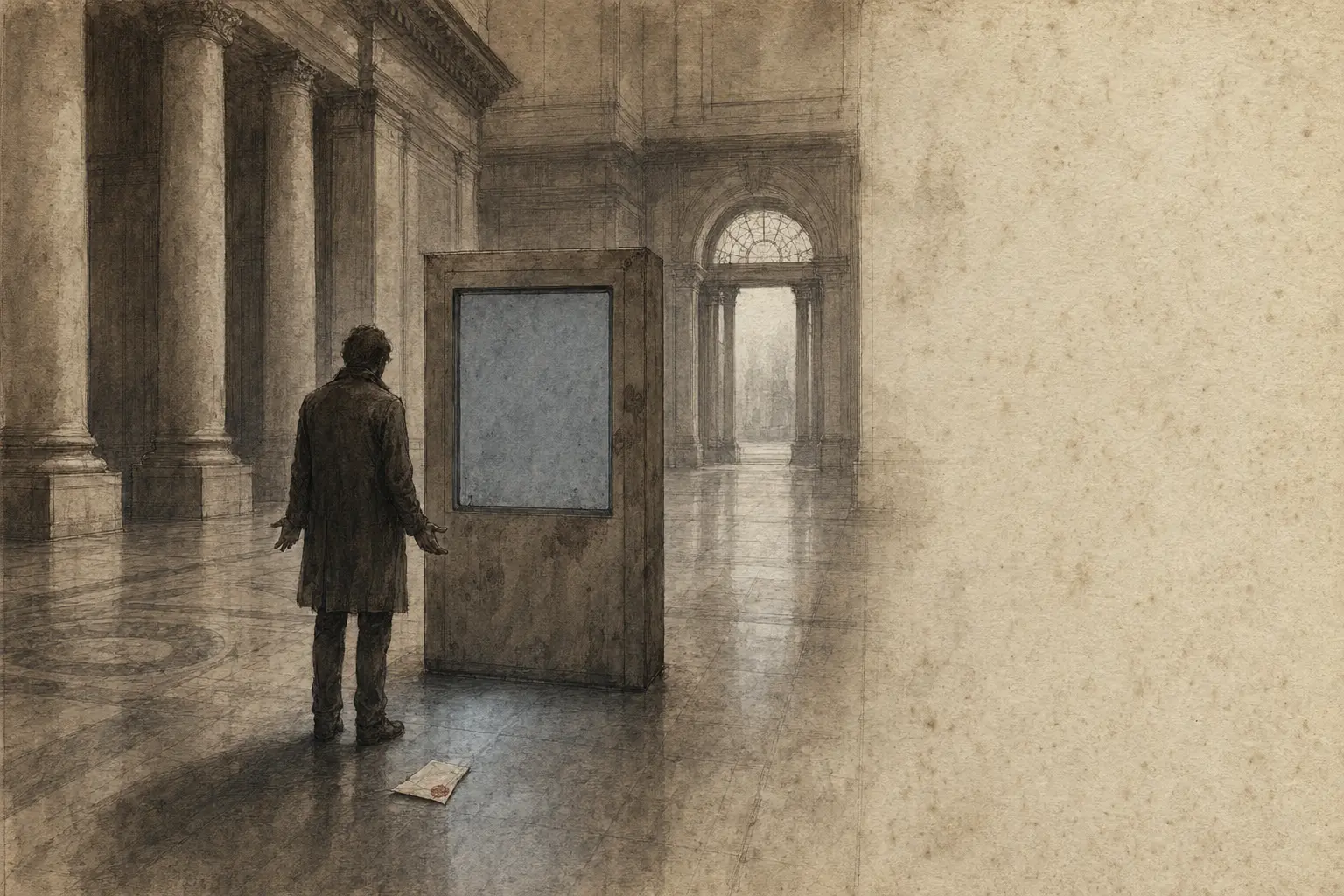

Picture a tenant denied an apartment by an automated risk score. The portal says no. The chatbot explains policy. No one can say which fact mattered, who is responsible, or how to correct the error. The decision is frictionless for the institution and immovable for the person.

Here artificial friction becomes domination.

The problem is not that a machine is involved. The problem is that the person cannot refuse it, question it, understand it, or reach a responsible human being behind it.

Then friction itself becomes a class privilege.

The rich may get chosen limits. The poor may get imposed automation plus unchosen hardship. Some people will choose wilderness. Others will endure broken infrastructure. Some will choose silence. Others will be ignored. Some will choose handwork. Others will perform insecure labor for low pay.

This is why the politics of AGI cannot be only about access to machines. Access matters. A world where only the rich have powerful AI would be unjust and dangerous. But a world where only the rich can refuse AI would also be unjust.

A person denied access to AI may be excluded from power. A person denied access to humans may be trapped inside power.

A humane society should give people both: tools that abolish needless misery, and institutions that preserve meaningful human development. Public schools, libraries, clinics, parks, sports, apprenticeships, arts, civic associations, and human appeal processes may become more important, not less.

The future may divide not only between those who have AI and those who do not, but between those who know when not to use it and those who were never given the choice.

That divide should not become hereditary.

Things That Do Not Respond to Prompts

So far, we have spoken mostly of formative friction: the resistance that trains skill and judgment. But some limits do something older. They teach proportion.

This is existential friction. It is not valuable because it makes us more productive. It is valuable because it interrupts the fantasy that the world is an extension of our will.

AGI may not merely automate tasks. It may habituate us to command and response.

“Make me a company.”

“Make me a film.”

“Make me a cure.”

“Make me loved.”

The machine may not grant all these wishes. But it may make the command feel natural. It may train us to experience reality as a surface waiting for instructions. This is the mood of omnipotence in spiritual form.

The sublime is an old counterweight to this new temptation. The sublime is not prettiness. It is not a pleasant garden or a well-designed app. It is an encounter with reality beyond command. In a world of obedient intelligence, human beings will need contact with things that cannot be personalized, optimized, negotiated with, or prompted.

We will need things that do not respond to prompts. The sea will not generate a customized answer. The mountain will not flatter us. The night sky will not become more user-friendly.

This indifference can sound cruel, but it is often merciful. The world does not revolve around you. At first this wounds the ego. Then it frees it.

There is a deep irony here. AGI may give humanity unprecedented cognitive power, and yet one of our most important therapies may be to remember our smallness.

Not worthlessness.

Smallness.

There is a difference.

To feel worthless is to collapse. To feel small before the cosmos is to be relieved of a burden. One does not have to be the measure of all things. One does not have to control everything. One does not have to turn every moment into a project.

The sublime tells the anxious modern person: your pain is real, but it is not the whole universe. That may become one of the essential sentences of the AGI age.

Post-Omnipotence Humanity

If AGI arrives by 2029, or 2039, or by some slower and stranger path, many societies may pass through several moods. First, amazement: the machine can do what we thought only humans could do. Then disturbance: if the machine can do it, what are humans for? Then selection: which difficulties should we abolish, which should we preserve, which should we redesign, and which should we make available to everyone?

We will need laws, markets, safety systems, labor protections, new institutions, and new forms of accountability. The material questions are real. Who owns the systems? Who benefits from the productivity? Who is displaced? Who is watched? Who can appeal? Who decides?

But beneath these questions lies another one. What kinds of humans do we want our tools to form?

We may try to build a frictionless civilization, where every discomfort is treated as a bug. That path will be seductive. It will promise safety, abundance, convenience, personalization, and endless assistance.

Some of this will be genuine progress. We should not sneer at comfort from a safe distance. For many people, comfort is not decadence. It is rescue.

But a society that systematically removes bounded stress may lose more than inconvenience. It may lose practices of adaptation. A species surrounded by obedient intelligence may need humility more than ever.

We will need assistants, yes. We will need systems that remove drudgery, expand medicine, widen education, and make expertise less scarce. But we will also need apprenticeships, games we can lose, work that tires the body, art that resists easy interpretation, arguments that cannot be auto-completed, classrooms where confusion is allowed, and nights under stars that do not answer back.

Once difficulty becomes optional, difficulty becomes a design choice.

The task is not to worship limits. It is to tell a cage from a scaffold — and to abolish the first without dismantling all of the second.

P.S. After I chose the subject, the text was written in seven iterations with openai-responses:gpt-5.5 and xhigh reasoning effort as writer and critic. The images were generated with OpenAI gpt-image-2. The critic's prompt was changed twice.